Independent Components Analysis (ICA) – A Perspective

Comments on Independent Components Analysis (ICA)

We have heard dialog and arguments related to the subject of ICA for several years. I am sure most if not all readers are familiar with some of the statements, publications, and opinions expressed. I have historically stayed out of this matter, as I expected that readers would be able to figure out for themselves what is being said and what it means. Unfortunately, this has not turned out to be the case. I still hear questions asking for basic understanding, in the form of “Is he right?”, “Is it bad?”, “Why would they say that?”, “So and so says...”, and so on. Most of these are unrelated to any facts or data, and instead turn the matter into a popularity contest or a basis for ad hominem arguments such as “They should know, they did this and that.”

I will attempt to separate opinions from facts as I understand them. To state that any method is intrinsically wrong or invalid is a bit like saying “soy sauce is bad because someone put it on my ice cream.” If ICA is misused, then that is a clear matter to understand and avoid. But the misuse of a tool does not invalidate the tool itself.

What is being overlooked is that this subject simply relates to one of many possible mathematical techniques for understanding data. It happens to be a very good technique with a lot of benefits. It also has pitfalls, and if these are not avoided, you can surely produce “junk” results, simply by misuse.

What is ICA?

ICA is a mathematical method that is intended to identify and quantify the electrical signals that can be associated with an identifiable dipole source, in signals that are assumed to be a combination of many dipoles. It is an “iterative” process, which means that it goes through successive approximations of the answer, until the result “converges” to a final answer. The result is a set of new signals, each of which is statistically related to a particular source dipole. For mathematical reasons, the number of ICA components that can be identified is no greater than the number of channels. This is intuitive. If you assume there is only one dipole, you could record it with one channel. ICA comes into play when you have more than 1 channel, and you want to estimate more than 1 dipole source.

But if there are two dipoles, then one channel can’t “take them apart,” but 2 channels can. No matter where you put those 2 sensors, an iterative mathematical procedure can find the ideal combination of the two, so one component reflects one dipole, and the other reflects the second dipole. Both components are based on both channels, so you now have two “virtual” channels that are components. There is a sound mathematical reason (“orthogonality of vectors,” or more precisely their linear independence) that, no matter where those dipoles are, if they project onto the sensors even slightly differently, then any 2 channels you place can pull them apart. What will change is the coefficients used for each channel, but there will always be a solution. Depending on where the sensors are, one of the dipoles will generally seem to be “the big one” and the other will appear smaller. But the algorithm will always find them.

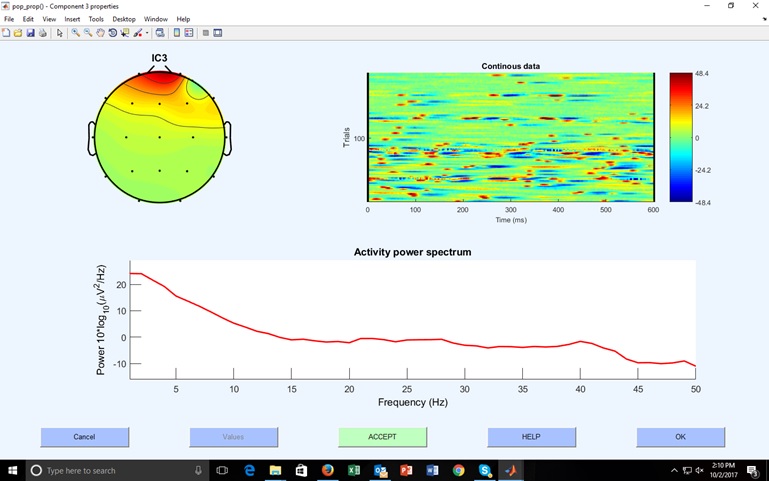

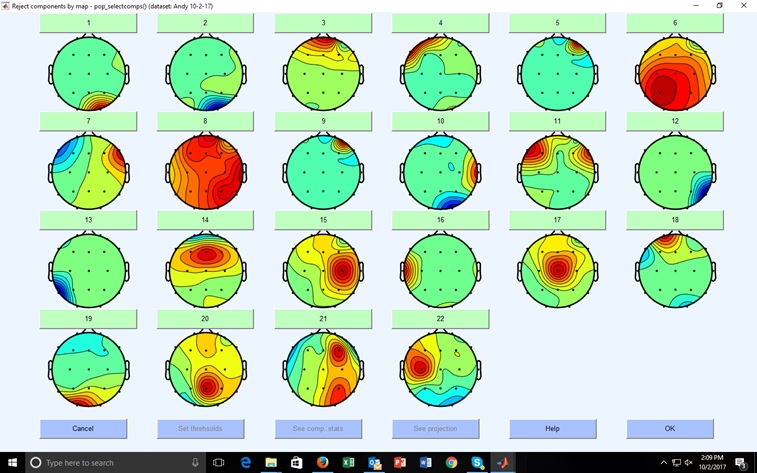
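The two-channel case can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the algorithm used by any particular EEG platform: the two sources, the 250 Hz sampling rate, and the `mixing` coefficients are all hypothetical, and the search over rotation angles is a simple stand-in for the iterative procedures that real ICA packages use. It whitens the two channels, then looks for the rotation that makes the two outputs as non-Gaussian as possible (measured by kurtosis).

```python
import numpy as np

def whiten(x):
    """Center channels-by-samples data and decorrelate it to unit variance."""
    centered = x - x.mean(axis=1, keepdims=True)
    evals, evecs = np.linalg.eigh(np.cov(centered))
    whitener = evecs @ np.diag(evals ** -0.5) @ evecs.T
    return whitener @ centered, whitener

def excess_kurtosis(y):
    """Fourth-moment measure of non-Gaussianity (near 0 for Gaussian data)."""
    y = (y - y.mean()) / y.std()
    return np.mean(y ** 4) - 3.0

def two_channel_ica(x, n_angles=720):
    """Grid-search the rotation of whitened data that maximizes non-Gaussianity."""
    z, whitener = whiten(x)
    best_score, best_rot = -np.inf, np.eye(2)
    for theta in np.linspace(0.0, np.pi / 2, n_angles, endpoint=False):
        c, s = np.cos(theta), np.sin(theta)
        rot = np.array([[c, s], [-s, c]])
        y = rot @ z
        score = excess_kurtosis(y[0]) ** 2 + excess_kurtosis(y[1]) ** 2
        if score > best_score:
            best_score, best_rot = score, rot
    unmixing = best_rot @ whitener          # coefficients applied to each channel
    return best_rot @ z, unmixing

# Hypothetical sources and sensor weights, chosen only for illustration.
rng = np.random.default_rng(0)
t = np.arange(5000) / 250.0                 # 20 s at an assumed 250 Hz
sources = np.vstack([
    np.sin(2 * np.pi * 10.0 * t),           # a 10 Hz "alpha-like" dipole
    rng.laplace(size=t.size),               # a spiky "non-brain" dipole
])
mixing = np.array([[1.0, 0.6],
                   [0.4, 1.0]])             # how each dipole reaches each sensor
channels = mixing @ sources                 # what the 2 sensors record

components, unmixing = two_channel_ica(channels)

# Absolute correlation of each true source (rows) with each component (columns).
match = np.abs(np.corrcoef(np.vstack([sources, components]))[:2, 2:])
print(np.round(match, 3))
```

Each row of `unmixing` holds the coefficients applied to the two channels to form one “virtual” channel. Changing the entries of `mixing` (that is, moving the sensors) changes those coefficients, but as long as the two columns of `mixing` are not proportional to each other, a solution still exists. The components come back in arbitrary order and with arbitrary sign, which is a general property of ICA.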

Extending this reasoning to more channels, you can understand why 19 surface channels should be able to identify 19 possible dipoles. It also follows that if there are actually fewer than 19 dipoles, then the remaining component estimates will be very small, reflecting only some residual “noise.” In reality, an ICA decomposition of an EEG typically contains at least one very clear eyeblink-related component, and possibly other eye-related components (lateral eye movements, eye rolls, micro-saccades, and other stereotypical eye activity). In addition, one or more alpha sources typically arise, along with other posterior rhythms, medial and frontal alpha sources, etc. It is possible to identify the eye-related and other non-brain components, and instruct the software to remove them. It is important to note that the choice of components to remove is a matter either for an experienced operator, or for an artificial intelligence algorithm that has been trained to recognize components for what they are.

Possible source dipoles abound in EEG. They include true generators of rhythms such as alpha, theta, SMR, etc., other brain sources which may include focal rhythms, biological dipoles such as the eyes, localized noise sources, even muscle activity. What the ICA identifies is determined by the statistical properties of the EEG, so that the most predominant dipoles are found first, followed by lesser ones.

Using ICA to analyze the EEG, which is its intended purpose (the “A” in ICA stands for “Analysis”), is informative and useful. There is no inherent danger in looking at a signal in terms of its components, and an ICA-type tool is a useful addition to any EEG (not only QEEG) platform.

The issue arises when one attempts to identify the components that one does not want and removes them mathematically from the signal. Many platforms allow this user-controlled process, which is not strictly part of “Independent Components Analysis,” but falls within a broader range of “Altering the signal by reconstructing it based upon ICA data, by mathematically subtracting that component from the entire data recording.” When an ICA component is removed from the original signal, every single data point is affected, since the dipole is assumed to be present all the time, but with a varying signal. If the removed components are attributed to sources such as eye rolls, eye blinks, micro-saccades, etc., then a cleaner EEG results, free of these sources. This is very useful for “harvesting” good epochs when doing Evoked Potentials, and this is a standard part of many ERP platforms. It is worth noting that, as far as phase is concerned, when ICA is properly used to remove non-brain dipole sources, the resulting changes in phase should be regarded as actually correcting the phase, not distorting it. Surely, the phase relationships in an artifact-reduced EEG would be more likely to be correct, when the eyes and such have been removed using a sound mathematical procedure.
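The reconstruction step described above can be sketched as follows. This is a minimal illustration, not any platform's implementation: the function name `remove_components`, the three synthetic sources, and the `mixing_true` matrix are hypothetical, and the unmixing matrix is simply taken as the inverse of the known mixing matrix, standing in for what an ICA run would estimate.

```python
import numpy as np

def remove_components(data, unmixing, drop):
    """Rebuild channel data from all components except those listed in `drop`."""
    mixing = np.linalg.inv(unmixing)        # column k: how component k projects to channels
    activations = unmixing @ data           # one time course per component
    keep = np.setdiff1d(np.arange(activations.shape[0]), drop)
    return mixing[:, keep] @ activations[keep]

# Hypothetical 3-channel recording built from 3 synthetic sources.
rng = np.random.default_rng(1)
t = np.arange(2500) / 250.0                 # 10 s at an assumed 250 Hz
alpha = np.sin(2 * np.pi * 10.0 * t)
eye = 0.5 * np.sin(2 * np.pi * 0.3 * t) + 0.05 * rng.standard_normal(t.size)
eye[500:550] += 4.0                         # one blink-like deflection
other = 0.2 * rng.standard_normal(t.size)
sources = np.vstack([alpha, eye, other])

mixing_true = np.array([[1.0, 0.9, 0.1],
                        [0.8, 0.3, 0.2],
                        [0.5, 0.1, 1.0]])
recording = mixing_true @ sources
unmixing = np.linalg.inv(mixing_true)       # assumption: a perfect ICA estimate

cleaned = remove_components(recording, unmixing, drop=[1])   # drop the eye component

# Which time points differ between the original and the cleaned recording?
changed = np.any(np.abs(recording - cleaned) > 1e-9, axis=0)
print(f"fraction of samples altered: {changed.mean():.3f}")
```

The difference `recording - cleaned` equals the eye component's time course scaled by its weight at each channel. Because that time course is nonzero almost everywhere, not only during the blink, essentially every sample in the recording is altered.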

However, if this altered EEG is then subjected to further processing that assumes that ICA removal was not performed, misleading results can occur. For example, if a database has been constructed with the presence of eye and other non-EEG dipoles in the signal, it will assume that those components are present. Even removal of eyeblinks and other artifacts from the database recordings will not remove the micro-saccades, slight eye rolls, and other eye-related “noise” that is present throughout the recording, so the database still reflects them.

Another useful application of ICA is to use it to remove clearly artifactual sources from every EEG sample, and construct the database from signals free of these sources. This is a valid procedure, and as long as the method used to classify and remove components is made clear, the database would be perfectly useful. However, using this database to process EEG that has not been ICA cleaned would produce misleading results, most notably the appearance of excess activity in the locations and ranges in which the eye and other non-brain sources are generating signals.

Both of these applications reflect the fact that if one uses ICA along with component removal to produce a “cleaned” EEG, that signal should only be used for purposes that are consistent. It follows that if one processes an EEG in this manner, then subjects it to an analysis based on non-cleaned EEG, misleading results may occur, including phase and even power abnormalities that are not really present. The process would in this case be regarded as having produced a contaminated EEG. Similar problems have occurred when other operations are applied without sufficient care. It is a fact, for example, that if you read EEG into one program, filter it in some way, resave it, and put it back into that program or another program, you can produce artifactual excesses or deficits purely by filtering. ICA component removal has a similar effect, only a more complex one than filtering, and one that requires judgment to determine which components to remove.
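The mismatch in both directions can be illustrated with a purely synthetic simulation. Everything here is an assumption made for illustration: the population size, the amplitudes of the “brain” and “eye” contributions, and the use of log power with a simple z-score are hypothetical and do not describe any actual normative database.

```python
import numpy as np

rng = np.random.default_rng(2)
n_subjects, n_samples = 200, 2000

def log_power(x):
    """Log of signal variance along the time axis."""
    return np.log(np.var(x, axis=-1))

def z_scores(values, reference):
    """Z-score values against the mean and SD of a reference population."""
    return (values - reference.mean()) / reference.std()

# Per-subject amplitude differences give the synthetic norms some spread.
brain_gain = rng.lognormal(mean=0.0, sigma=0.3, size=(n_subjects, 1))
eye_gain = rng.lognormal(mean=np.log(0.8), sigma=0.3, size=(n_subjects, 1))
brain = brain_gain * rng.standard_normal((n_subjects, n_samples))
eye = eye_gain * rng.standard_normal((n_subjects, n_samples))

uncleaned_db = log_power(brain + eye)       # database built with eye activity present
cleaned_db = log_power(brain)               # database built after removing it

matched = z_scores(uncleaned_db, uncleaned_db).mean()
cleaned_vs_uncleaned = z_scores(cleaned_db, uncleaned_db).mean()
uncleaned_vs_cleaned = z_scores(uncleaned_db, cleaned_db).mean()

print(f"matched processing:           mean z = {matched:+.2f}")
print(f"cleaned EEG, uncleaned norms: mean z = {cleaned_vs_uncleaned:+.2f}")
print(f"uncleaned EEG, cleaned norms: mean z = {uncleaned_vs_cleaned:+.2f}")
```

When the processing matches, the average z-score is zero by construction. Cleaned EEG compared against uncleaned norms shows an apparent deficit, and uncleaned EEG compared against cleaned norms shows an apparent excess, even though the same simulated subjects are used in every comparison.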

In summary, ICA is neither bad nor good, nor is it right or wrong. It is one more technique for digital analysis of signals arising from multiple dipoles, particularly EEG. When used properly it is a valuable and informative tool. You don’t have to use it if you don’t want to; you can look at your data without it. However, if you use it to analyze, and particularly to reprocess EEG, you have to follow the logical process. Cleaning an EEG with ICA and then running it through any database that does not assume ICA cleaning is guaranteed to produce some inaccuracies. When misused in this way, errors can occur that manifest as phase and amplitude abnormalities that are not real.

In my personal opinion, because ICA is a postprocessing method that cannot be used in real time, I am less inclined to adopt it. I am personally interested in methods that can be used in real time, such as z-builder or live z-score feedback including sLORETA and eLORETA connectivity and boundary imaging. The value of ICA in QEEG analysis is not, to me, compelling enough to require its use. There is no evidence that eschewing ICA in a QEEG environment is a particular problem. In addition, when used to harvest epochs for event-related potentials, it is again a postprocessing method that requires EEGLAB or a similar program, which has to be separately used, or it has to be built into the ERP system. Again, if the benefit is one of harvesting, not improving results, we can do ERP work without it. As a result, I find the controversy and dialog over ICA to make too much of a simple issue, aside from the clear understanding that you should not mix incompatible methods in any case.

This has not considered other valuable uses of ICA, for example in decomposing event-related potentials into dipole sources. When used in this way, ICA provides a yet deeper representation of the underlying EEG mechanisms. Clearly there is more to ICA than meets the eye, and it has an important role today and in the future.

copyright 2021 Thomas F. Collura and BrainMaster Technologies, Inc.